When global circumstances required our team to go completely remote, we knew things would be tough. Team members wouldn’t just be working from home; they’d be working from home during a time of intense fear and uncertainty, with a myriad of new concerns and distractions. We expected that engineering activity would decline as a result, and we were understanding — as our VP of Engineering, Ale Paredes, explained during a panel on working remotely through the crisis, “We’re not trying to behave as if it’s business as usual, because it’s not business as usual.”

But when Ale checked the team’s productivity metrics in Velocity, our engineering analytics platform, she was surprised by what she found. After we made the switch to a distributed workflow, many engineers actually started working more. Still, despite logging more time in the codebase, they were getting less done.

To find out why the team wasn’t making progress, Ale dug deeper into the data. Not only did she find answers, she used that information to develop better ways to support the team.

Engineering Metrics Served as a Diagnostic Tool

Ale and the engineering team regularly track certain key metrics in Velocity, using data about Pull Request Throughput, Cycle Time, and Incidents to get a sense of how they’re doing. They review this information on a bi-weekly basis, so they can address red flags as early as possible or replicate processes that are having a positive impact.

When something appears to be trending in a concerning direction, the team drills down deeper into the data, then uses that information as a starting point for troubleshooting conversations.

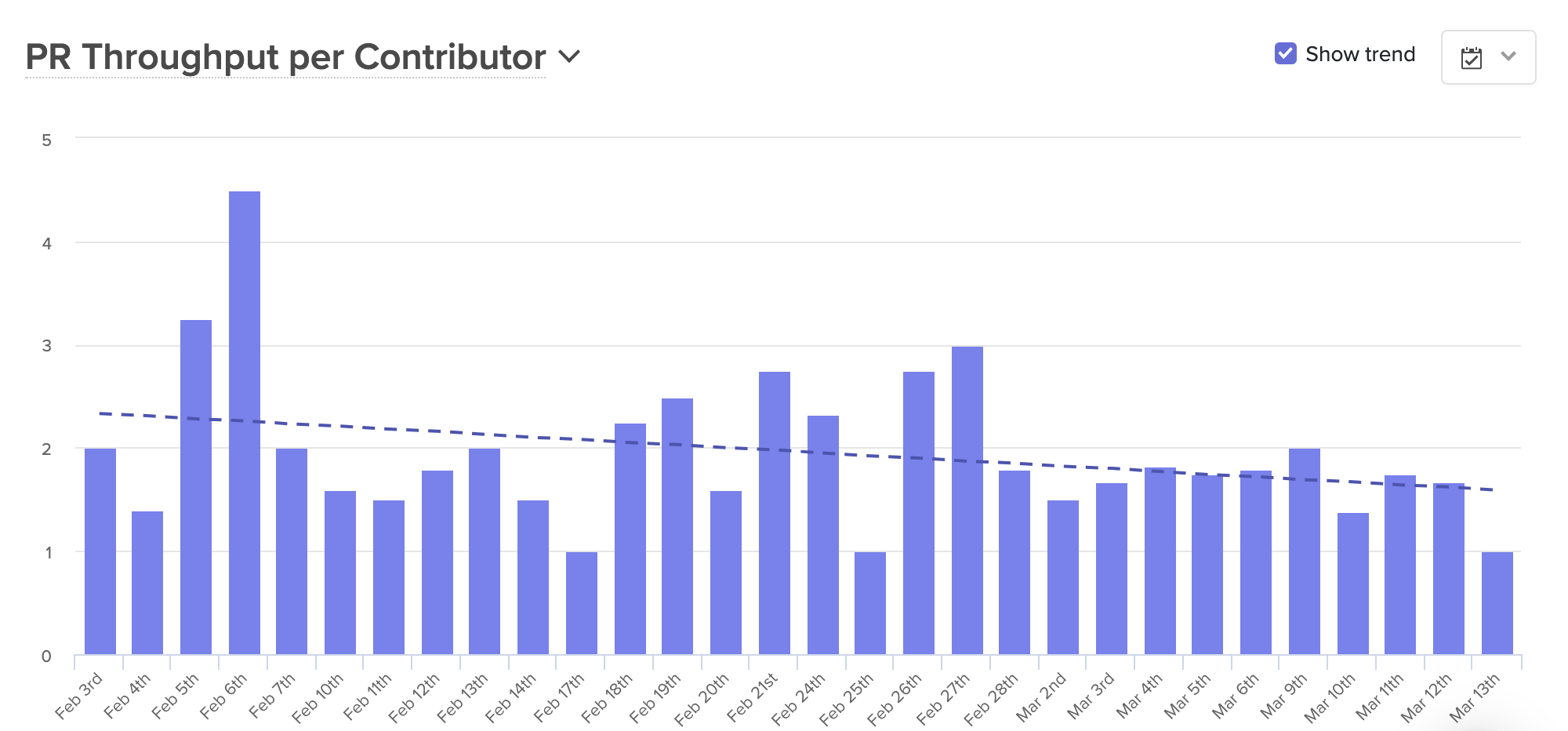

After the team transitioned to a fully distributed workflow, they noticed that their Pull Request Throughput was significantly lower than usual.

Knowing that the team was merging fewer Pull Requests than expected, Ale took a closer look at the stages of the coding process to find out why. This investigation included a look at:

- Time to Open: The time between a developer’s first commit and the opening of an associated Pull Request. If the team simply wasn’t pushing commits in the first place, it could be a sign that their attention was focused elsewhere, or that they were being sidelined by anxiety or emotional stress. If developers were coding but not opening PRs, they could be confused about project specifications.

- Time to First Review: The period between when a Pull Request is opened and when it’s picked up for review. A slowdown here could mean that physical distance was causing engineers to focus on their own work and deprioritize assisting their teammates.

- Time to Approve: The period of time a PR spends in the code review process. If PRs were getting stuck in code review, it could indicate a lack of alignment between members of the team.

- Time to Deploy: The actual deployment process. A holdup at this phase could be the result of technical issues or a lack of confidence in new code.
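
As a rough illustration of the breakdown above, the sketch below splits each Pull Request's lifetime into the four stages and reports which stage takes longest on average. It is not how Velocity computes these metrics: the `PullRequest` record, its field names, the exact boundaries chosen for Time to Approve and Time to Deploy, and the sample timestamps are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List


@dataclass
class PullRequest:
    # Hypothetical record; these field names are illustrative only.
    first_commit_at: datetime
    opened_at: datetime
    first_review_at: datetime
    approved_at: datetime
    deployed_at: datetime


def stage_durations(pr: PullRequest) -> Dict[str, timedelta]:
    """Split one PR's lifetime into the four stages listed above."""
    return {
        "time_to_open": pr.opened_at - pr.first_commit_at,
        "time_to_first_review": pr.first_review_at - pr.opened_at,
        # Assumption: review time runs from first review to approval.
        "time_to_approve": pr.approved_at - pr.first_review_at,
        # Assumption: deployment time runs from approval to deploy.
        "time_to_deploy": pr.deployed_at - pr.approved_at,
    }


def mean_stage_hours(prs: List[PullRequest]) -> Dict[str, float]:
    """Average each stage, in hours, across a list of PRs."""
    if not prs:
        raise ValueError("need at least one pull request")
    totals: Dict[str, float] = {}
    for pr in prs:
        for stage, duration in stage_durations(pr).items():
            totals[stage] = totals.get(stage, 0.0) + duration.total_seconds() / 3600
    return {stage: total / len(prs) for stage, total in totals.items()}


def slowest_stage(prs: List[PullRequest]) -> str:
    """Name the stage with the highest average duration."""
    averages = mean_stage_hours(prs)
    return max(averages, key=averages.get)


# Made-up sample data: two PRs whose first commit lands at the same moment.
start = datetime(2020, 4, 1, 9, 0)
sample = [
    PullRequest(start, start + timedelta(hours=30), start + timedelta(hours=32),
                start + timedelta(hours=36), start + timedelta(hours=37)),
    PullRequest(start, start + timedelta(hours=50), start + timedelta(hours=53),
                start + timedelta(hours=55), start + timedelta(hours=57)),
]

print(mean_stage_hours(sample))
print(slowest_stage(sample))
```

With this sample data, the longest average stage is `time_to_open`, which is the kind of signal that would prompt a closer look at what happens between a developer's first commit and the opening of the PR.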

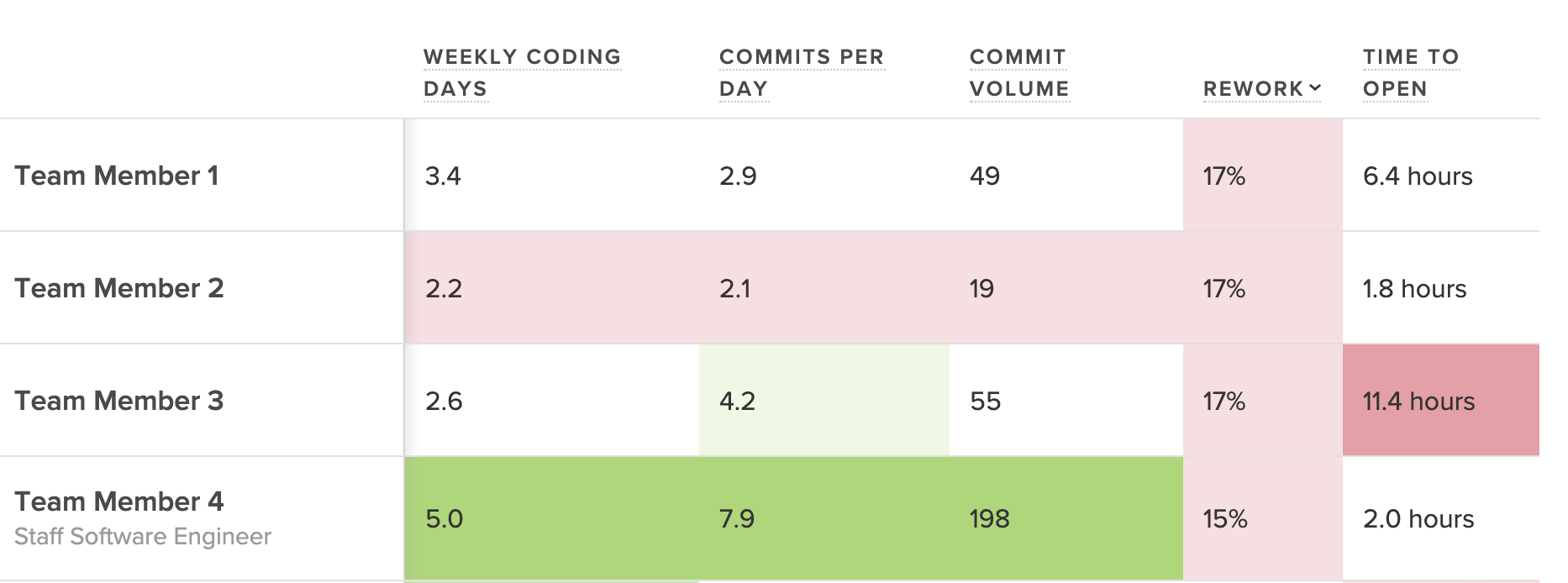

As she dug into the metrics, Ale saw that Time to Open was the source of the slowdown. The engineering team was clearly working (they were pushing commits and actively coding), but they were completing a higher-than-usual percentage of Rework. Something was keeping the team writing and rewriting the same pieces of code, which concerned Ale. “Beyond the waste itself, which is not great, I worried that if we didn’t address the issue right away, it could impact team morale.”

Improved Communication Helped Decrease Levels of Rework

With this information, Ale approached the engineering team’s squad leads “to try to understand what was blocking the team, and to identify how we could work together to create a process that was more streamlined.” Through these conversations, Ale discovered that individual developers were getting hung up as a result of miscommunications and unclear specs.

Though the team was having regular remote meetings, developers still lacked the information they needed to do their work. “People didn’t have the same amount of context,” Ale found. “We used to rely on the fact that we were all together in the office so that if I had something to say, the person next to me would hear it…our team is small enough that usually, everyone on the team has context.”

Without a process in place for sharing this big-picture information, engineers were getting left in the dark. They didn’t always know how their work fit into the project as a whole, so they were making assumptions, some of which were incorrect. At the same time, “we noticed that people were using direct messages to communicate, so everyone had slightly different bits of information,” and developers were forced to continually revise their work as new information came to light.

Armed with these realizations, Ale and the team were able to create new processes to combat misinformation and enhance transparency:

- They created systems for intentional public communication. Discussions were moved to a public team Slack channel, with particularly complex conversations happening in groups over Zoom. Decisions were logged in Jira, with each engineer responsible for adding relevant comments to any issues they owned, so the rest of the team could reference them when needed.

- They leaned more heavily on documentation. For each new feature, the team worked with Product to draw up a detailed spec document. These documents were then discussed at kickoff meetings for each new track of work so that everyone could see the details and ask questions. For projects that required more in-depth technical design, engineers would write a Request for Comment document and store it in markdown format in GitHub, so teammates could leave comments as part of the code review process.

- They wrote down and shared their plans. Each week, the team wrote down their plans for the next few days and shared them with the rest of the team.

- They fostered a culture of sharing. As she had always done, Ale continued to encourage senior engineers to use the team’s public Slack channel to discuss challenges and think through solutions, so that team members would feel safer raising questions and admitting confusion.

With the new processes in place, the team kept an eye on the metrics to evaluate their success.

The Productive and Human Benefits of Increased Transparency

The new processes helped increase transparency, reduce confusion, and boost productivity.

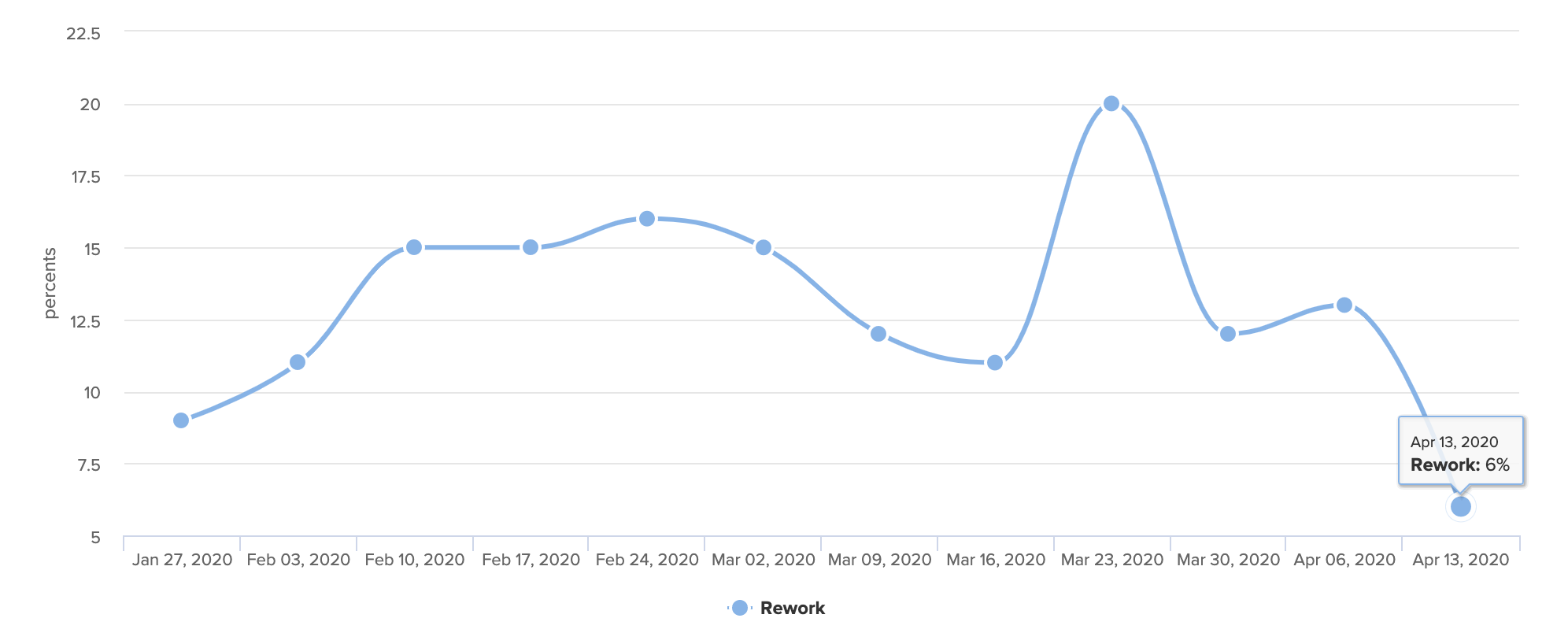

In a span of four weeks, Rework fell from a high of 20% to just 6%.

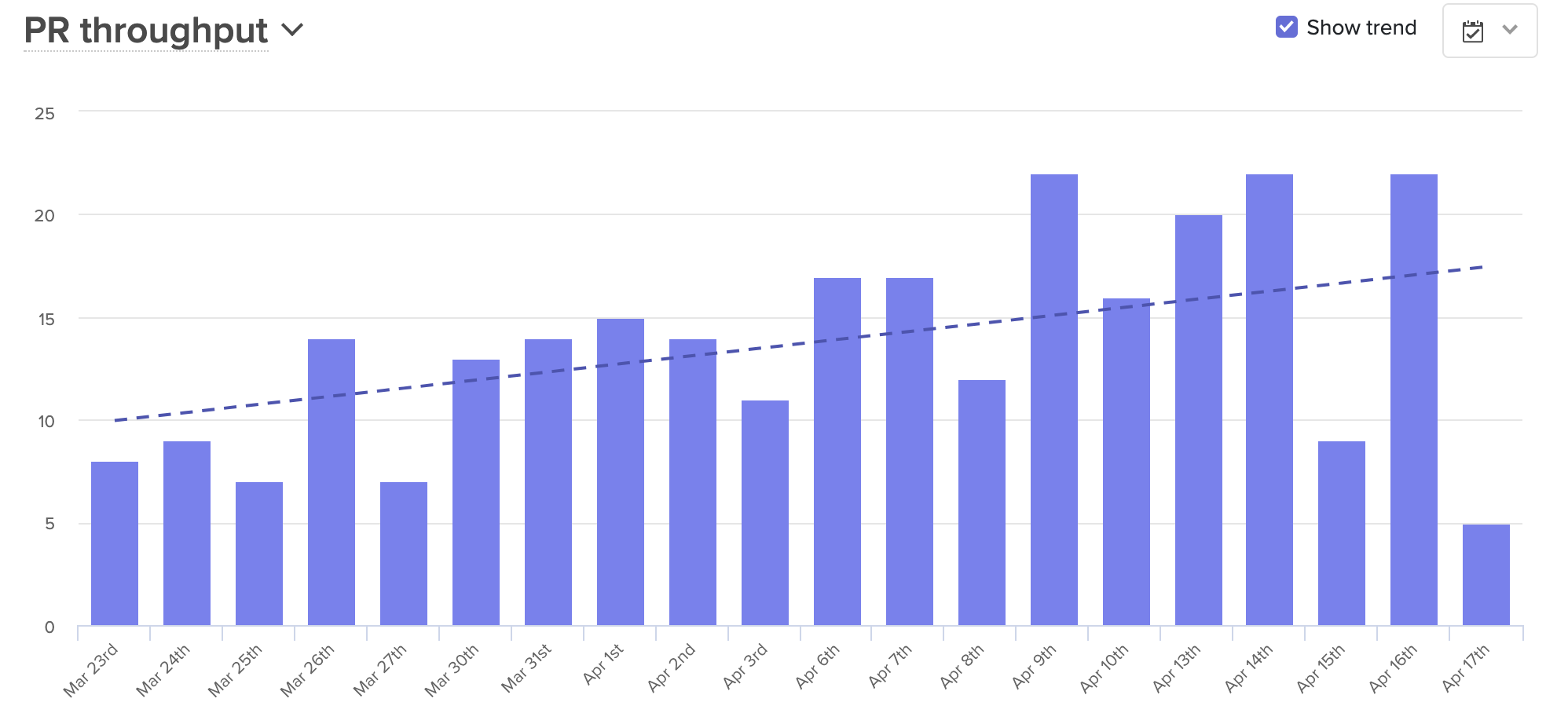

Over that same period, average PR Throughput went up almost 70%.

But most importantly, engineers are now less confused and are finding more opportunities to collaborate. The emphasis on documentation and written plans offers the opportunity to ask questions at every stage of the process. And with everyone looped in, developers can lend a hand to people on other teams, something that frequently occurred when everyone was together in the office.

Writing things down also has an unforeseen benefit — it’s helping the team avoid unnecessary meetings. While the team is remote, they’re using that extra time to connect on a more human level, building time into each standup to check in with each other, and carving time out of retros to talk about how they’re coping with the current situation. As Ale explained, “We were able to adapt our last retro to how we were feeling, instead of just focusing on the product process. We understood that was not what we needed to be talking about right now, that we need to talk about how we’re feeling and how we’re coping.”

Ale expects the team will continue to see benefits from these new processes, even once they return to the office. Alignment has increased, communication has improved, and enhanced documentation will make it easier to onboard new team members. Plus, when the team reverts to their partially distributed structure, they’ll do so with a greater sense of unity. As Ale put it, “We now have a lot more empathy for our remote team members.”

If you’d like to learn more about how engineering analytics can help you support your team, watch our free, 45-minute live webinar with Code Climate Co-Founder Noah Davis. This webinar focuses on how to use engineering data from Velocity to improve the way you run remote meetings.
